Casting a result to float in method returning float changes result

David's comment is correct but insufficiently strong. There is no guarantee that doing that calculation twice in the same program will produce the same results.

The C# specification is extremely clear on this point:

Floating-point operations may be performed with higher precision than the result type of the operation. For example, some hardware architectures support an “extended” or “long double” floating-point type with greater range and precision than the double type, and implicitly perform all floating-point operations using this higher precision type. Only at excessive cost in performance can such hardware architectures be made to perform floating-point operations with less precision, and rather than require an implementation to forfeit both performance and precision, C# allows a higher precision type to be used for all floating-point operations. Other than delivering more precise results, this rarely has any measurable effects. However, in expressions of the form x * y / z, where the multiplication produces a result that is outside the double range, but the subsequent division brings the temporary result back into the double range, the fact that the expression is evaluated in a higher range format may cause a finite result to be produced instead of an infinity.
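The x * y / z effect in the quoted passage can be imitated one level down. The following is only a sketch, written in Java because its arithmetic is fully specified: float stands in for the declared type and double stands in for the wider intermediate format. The class and method names are made up for illustration.

```java
class WideIntermediate {
    // x * y / z evaluated entirely in float: the product overflows first.
    static float narrow(float x, float y, float z) {
        return x * y / z;
    }

    // Same expression with a double intermediate (a stand-in for the
    // "higher precision type" of the quoted passage); only the final
    // result is narrowed back to float.
    static float wide(float x, float y, float z) {
        return (float) ((double) x * y / z);
    }

    public static void main(String[] args) {
        float x = 1e38f, y = 10f, z = 10f;
        System.out.println(narrow(x, y, z)); // Infinity
        System.out.println(wide(x, y, z));   // a finite value near 1e38
    }
}
```

Whether a runtime uses the wider format is exactly the choice the specification leaves open, which is why the two answers can both be legal.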

The C# compiler, the jitter and the runtime all have broad latitude to give you more accurate results than are required by the specification, at any time, at a whim -- they are not required to choose to do so consistently and in fact they do not.

If you don't like that then do not use binary floating point numbers; either use decimals or arbitrary precision rationals.
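As a rough illustration of the "use decimals" advice, here is a sketch in Java, where BigDecimal plays the role that decimal plays in C#. The class and method names are hypothetical.

```java
import java.math.BigDecimal;

class DecimalArithmetic {
    // Built from strings, so the decimal digits are taken literally
    // instead of being rounded to the nearest binary double first.
    static BigDecimal exactSum() {
        return new BigDecimal("0.10").add(new BigDecimal("0.20"));
    }

    public static void main(String[] args) {
        System.out.println(0.10 + 0.20); // 0.30000000000000004
        System.out.println(exactSum());  // 0.30
    }
}
```

The trade-off is speed and convenience: decimal types are slower than hardware binary floating point, but sums of decimal fractions come out as written.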

I don't understand why casting to float in a method that returns float makes the difference it does

Excellent point.

Your sample program demonstrates how small changes can cause large effects. You note that in some version of the runtime, casting to float explicitly gives a different result than not doing so. When you explicitly cast to float, the C# compiler gives a hint to the runtime to say "take this thing out of extra high precision mode if you happen to be using this optimization". As the specification notes, this has a potential performance cost.

That doing so happens to round to the "right answer" is merely a happy accident; the right answer is obtained because in this case losing precision happened to lose it in the correct direction.

How is .net 4 different?

You ask what the difference is between 3.5 and 4.0 runtimes; the difference is clearly that in 4.0, the jitter chooses to go to higher precision in your particular case, and the 3.5 jitter chooses not to. That does not mean that this situation was impossible in 3.5; it has been possible in every version of the runtime and every version of the C# compiler. You've just happened to run across a case where, on your machine, they differ in their details. But the jitter has always been allowed to make this optimization, and always has done so at its whim.

The C# compiler is also completely within its rights to choose to make similar optimizations when computing constant floats at compile time. Two seemingly-identical calculations in constants may have different results depending upon details of the compiler's runtime state.

More generally, your expectation that floating point numbers should have the algebraic properties of real numbers is completely out of line with reality; they do not have those algebraic properties. Floating point operations are not even associative; they certainly do not obey the laws of multiplicative inverses as you seem to expect them to. Floating point numbers are only an approximation of real arithmetic; an approximation that is close enough for, say, simulating a physical system, or computing summary statistics, or some such thing.
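The two algebraic claims above are easy to check. This is a small sketch in Java (chosen because its double arithmetic is deterministic); the names are invented for the example, and the search limit of 100 is arbitrary.

```java
class NotRealNumbers {
    // Compares the two groupings of 0.1 + 0.2 + 0.3.
    static boolean additionIsAssociativeHere() {
        double a = 0.1, b = 0.2, c = 0.3;
        return (a + b) + c == a + (b + c);
    }

    // Returns the first n in 1..limit for which n * (1/n) is not exactly 1,
    // or -1 if every n in the range happens to round back to 1.
    static int firstBrokenInverse(int limit) {
        for (int n = 1; n <= limit; n++) {
            if (n * (1.0 / n) != 1.0) {
                return n;
            }
        }
        return -1;
    }

    public static void main(String[] args) {
        System.out.println(additionIsAssociativeHere()); // false
        System.out.println(firstBrokenInverse(100));
    }
}
```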

Why does casting to float produce correct result in java?

It's not particularly well-explained by the documentation, but Double.toString(double) essentially performs some rounding in the output it produces. The Double.toString algorithm is used all throughout Java SE, including e.g. PrintStream.println(double) of System.out. The documentation says this:

How many digits must be printed for the fractional part of m or a? There must be at least one digit to represent the fractional part, and beyond that as many, but only as many, more digits as are needed to uniquely distinguish the argument value from adjacent values of type double. That is, suppose that x is the exact mathematical value represented by the decimal representation produced by this method for a finite nonzero argument d. Then d must be the double value nearest to x; or if two double values are equally close to x, then d must be one of them and the least significant bit of the significand of d must be 0.

In other words, it says that the return value of toString is not necessarily the exact decimal representation of the argument. The only guarantee is that (roughly speaking) the argument is closer to the return value than any other double value.

So when you do something like System.out.println(1.10) and 1.1 is printed, this does not mean the value passed in is actually equivalent to the base 10 value 1.10. Rather, essentially the following happens:

- First, during compilation, the literal 1.10 is examined and rounded to produce the nearest double value. (The JLS says the rules for this are detailed in e.g. Double.valueOf(String) for double.)

- Second, when the program runs, Double.toString produces a String representation of some decimal value which the double value produced in the previous step is closer to than any other double value.

It just so happens that conversion to String in the second step often produces a String which is the same as the literal in the first step. I'd assume this is by design. Anyhow though, the literal e.g. 1.10 does not produce a double value which is exactly equal to 1.10.
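The two steps can be observed directly. A minimal sketch, with hypothetical helper names, that compares the short printed form with the exact stored value and checks that the short form still parses back to the same double:

```java
import java.math.BigDecimal;

class ShortestRepr {
    // What println shows: the shortest digits that identify the double.
    static String printed(double d) {
        return Double.toString(d);
    }

    // The exact decimal expansion of the stored binary value.
    static String exact(double d) {
        return new BigDecimal(d).toString();
    }

    public static void main(String[] args) {
        double d = 1.10;
        System.out.println(printed(d)); // 1.1
        System.out.println(exact(d));   // a much longer expansion
        System.out.println(Double.parseDouble(printed(d)) == d); // true
    }
}
```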

You can discover the actual value of a double (or float, because they can always fit in a double) using the BigDecimal(double) constructor:

When a double must be used as a source for a BigDecimal, note that this constructor provides an exact conversion; it does not give the same result as converting the double to a String using the Double.toString(double) method and then using the BigDecimal(String) constructor. To get that result, use the static valueOf(double) method.

// 0.899999999999999911182158029987476766109466552734375

System.out.println(new BigDecimal((double) ( 2.00 - 1.10 )));

// 0.89999997615814208984375

System.out.println(new BigDecimal((float) ( 2.00 - 1.10 )));

You can see that neither result is actually 0.9. It's more or less just a coincidence that Float.toString happens to produce 0.9 in that case and Double.toString does not.
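For completeness, this sketch prints the same two values through the toString methods instead of the exact BigDecimal constructor, and also shows BigDecimal.valueOf, which goes through Double.toString as the quoted documentation describes. The class and method names are illustrative only.

```java
import java.math.BigDecimal;

class FloatVsDoublePrinting {
    static String asDouble() {
        return Double.toString(2.00 - 1.10);
    }

    static String asFloat() {
        return Float.toString((float) (2.00 - 1.10));
    }

    public static void main(String[] args) {
        System.out.println(asDouble()); // 0.8999999999999999
        System.out.println(asFloat());  // 0.9
        // valueOf inherits the rounded digits of Double.toString,
        // unlike new BigDecimal(double), which is exact.
        System.out.println(BigDecimal.valueOf(2.00 - 1.10)); // 0.8999999999999999
    }
}
```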

As a side note, (double) (2.00 - 1.10) is a redundant cast. 2.00 and 1.10 are already double literals so the result of evaluating the expression is already double. Also, to subtract float, you need to cast both operands like (float) 2.00 - (float) 1.10 or use float literals like 2.00f - 1.10f. (float) (2.00 - 1.10) only casts the result to float.

Issues with division: casting an int return type to float, returning 0

If you cast a float to an int and then back to a float, you will probably not get the original float back.

What you need is to take what is present in memory and treat it as an int. For this kind of manipulation you can use a union.

For example, add:

typedef union {

float f;

int i;

} IF_t;

In the divide function, replace the print and the return with:

IF_t r;

r.f = a/b;

printf("\nIn Func = %f\n", r.f);

return r.i;

and replace the printf in main with:

res.i = divide(argList.count, argList);

printf("Out of Func = %f\n", res.f);

Of course, you also need to declare IF_t res; in main.
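The difference between the two round trips can be sketched in Java, which has no unions but offers Float.floatToIntBits and Float.intBitsToFloat for the same "treat the bits as an int" idea. This is an analogy to the C union above, not a replacement for it, and the names are made up.

```java
class RoundTrip {
    // Value conversion: the fractional part is discarded on the way to int.
    static float viaValueCast(float f) {
        return (float) (int) f;
    }

    // Bit reinterpretation: the 32 bits travel through the int unchanged.
    static float viaBits(float f) {
        return Float.intBitsToFloat(Float.floatToIntBits(f));
    }

    public static void main(String[] args) {
        System.out.println(viaValueCast(2.5f)); // 2.0
        System.out.println(viaBits(2.5f));      // 2.5
    }
}
```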

How can I return a float from a function with different type arguments?

Two problems with your code.

- If you want to return an object type then you need to cast your result to float before using it.

- Not all code paths return a value.

You can try this:

/// <summary>

/// find other types of distances along with the basic one

/// </summary>

public float DistanceToNextPoint (Vector3 from, Vector3 to, int typeOfDistance)

{

float distance = 0f; // default when typeOfDistance matches no case, so the return below always has a value

switch(typeOfDistance)

{

case 0:

//this is used to find a normal distance

distance = Vector3.Distance(from, to);

break;

case 1:

//This is mostly used when the cat is climbing the fence

distance = Vector3.Distance(from, new Vector3(from.x, to.y, to.z));

break;

}

return distance;

}

The changes include:

- Return float type instead of object

- Make sure all code paths return a float type

- Reorganize to use a switch

C# 'unsafe' function — *(float*)(&result) vs. (float)(result)

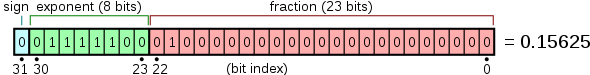

On .NET a float is represented as an IEEE binary32 single-precision floating-point number stored in 32 bits. Apparently the code constructs this number by assembling the bits into an int and then casts it to a float using unsafe. The cast is what in C++ terms is called a reinterpret_cast, where no conversion is done when the cast is performed; the bits are just reinterpreted as a new type.

The number assembled is 4019999A in hexadecimal or 01000000 00011001 10011001 10011010 in binary:

- The sign bit is 0 (it is a positive number).

- The exponent bits are 10000000 (or 128), resulting in the exponent 128 - 127 = 1 (the fraction is multiplied by 2^1 = 2).

- The fraction bits are 00110011001100110011010 which, if nothing else, almost have a recognizable pattern of zeros and ones.

The float returned has the exact same bits as 2.4 converted to floating point and the entire function can simply be replaced by the literal 2.4f.
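The field breakdown above can be reproduced with a few shifts and masks. This is a sketch in Java rather than C#, with hypothetical method names, and rebuild only handles normal numbers (exponent field neither 0 nor 255).

```java
class DecodeBinary32 {
    static int sign(int bits) {
        return bits >>> 31;
    }

    static int exponent(int bits) {
        return (bits >>> 23) & 0xFF;
    }

    static int fraction(int bits) {
        return bits & 0x7FFFFF;
    }

    // value = (1 + fraction / 2^23) * 2^(exponent - 127), normal numbers only
    static float rebuild(int bits) {
        double significand = 1.0 + fraction(bits) / (double) (1 << 23);
        double value = significand * Math.pow(2, exponent(bits) - 127);
        return (float) (sign(bits) == 0 ? value : -value);
    }

    public static void main(String[] args) {
        int bits = 0x4019999A;
        System.out.println(sign(bits));     // 0
        System.out.println(exponent(bits)); // 128
        System.out.println(rebuild(bits));  // 2.4
    }
}
```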

The final bits that sort of "break the bit pattern" of the fraction are there because the binary expansion of 2.4 repeats 0011 forever and has to be rounded to fit into 23 fraction bits; here it rounds up, which is also what makes the float match the literal 2.4f.

So what is the difference between a regular cast and this weird "unsafe cast"?

Assume the following code:

int result = 0x4019999A; // 1075419546

float normalCast = (float) result;

float unsafeCast = *(float*) &result; // Only possible in an unsafe context

The first cast takes the integer 1075419546 and converts it to its floating point representation, i.e. 1075419546f (rounded to the nearest value a float can hold, since a float has only 24 significant bits). This involves computing the sign, exponent and fraction bits required to represent the original integer as a floating point number. This is a non-trivial computation that has to be done.

The second cast is more sinister (and can only be performed in an unsafe context). The &result takes the address of result, returning a pointer to the location where the integer 1075419546 is stored. The pointer dereferencing operator * can then be used to retrieve the value pointed to by the pointer. Using *&result would retrieve the integer stored at that location. However, by first casting the pointer to a float* (a pointer to a float), a float is retrieved from the memory location instead, and the float 2.4f is assigned to unsafeCast. So *(float*) &result reads as: "give me a pointer to result, assume it is a pointer to a float, and retrieve the value it points to".

As opposed to the first cast, the second cast doesn't require any computation. It just shoves the 32 bits stored in result into unsafeCast (which fortunately is also 32 bits wide).

In general performing a cast like that can fail in many ways but by using unsafe you are telling the compiler that you know what you are doing.
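The same contrast can be shown without unsafe code. The sketch below uses Java, where Float.intBitsToFloat performs the bit reinterpretation that *(float*) &result performs in C#; the class and method names are invented for the example.

```java
class ReinterpretVsConvert {
    // Numeric conversion: produces the float closest to the integer value.
    static float convert(int i) {
        return (float) i;
    }

    // Bit reinterpretation: the 32 bits are read as an IEEE binary32 value.
    static float reinterpret(int i) {
        return Float.intBitsToFloat(i);
    }

    public static void main(String[] args) {
        int result = 0x4019999A; // 1075419546
        System.out.println(convert(result));     // roughly 1.0754195e9
        System.out.println(reinterpret(result)); // 2.4
    }
}
```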

Kotlin casting int to float

To confirm other answers, and correct what seems to be a common misunderstanding in Kotlin, the way I like to phrase it is:

A cast does not convert a value into another type; a cast promises the compiler that the value already is the new type.

If you had an Any or Number reference that happened to point to a Float object:

val myNumber: Any = 6f

Then you could cast it to a Float:

myNumber as Float

But that only works because the object already is a Float; we just have to tell the compiler. That wouldn't work for another numeric type; the following would give a ClassCastException:

myNumber as Double

To convert the number, you don't use a cast; you use one of the conversion functions, e.g.:

myNumber.toDouble()

Some of the confusion may come because languages such as C and Java have been fairly lax about numeric types, and perform silent conversions in many cases. That can be quite convenient; but it can also lead to subtle bugs. For most developers, low-level bit-twiddling and calculation is less important than it was 40 or even 20 years ago, and so Kotlin moves some of the numeric special cases into the standard library, and requires explicit conversions, bringing extra safety.
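Java, mentioned above as one of the laxer languages, still draws the same line once values are boxed. A small sketch (names are hypothetical) showing that a reference cast to the wrong wrapper type fails at run time while an explicit conversion works:

```java
class CastVsConvert {
    // A cast only asserts the runtime type; it does not change the object.
    static boolean castToDoubleFails(Object boxed) {
        try {
            Double d = (Double) boxed;
            return false;
        } catch (ClassCastException e) {
            return true;
        }
    }

    // A conversion computes a new value of the requested type.
    static double convert(Object boxed) {
        return ((Number) boxed).doubleValue();
    }

    public static void main(String[] args) {
        Object myNumber = 6f; // boxed as java.lang.Float
        System.out.println(castToDoubleFails(myNumber)); // true
        System.out.println(convert(myNumber));           // 6.0
    }
}
```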

PHP code returns value in float

The return type of round is float, as the manual states. You need to cast the result to integer:

$result = (integer) round($value);

Update:

Although I think a method should return either floats or integers and not change this depending on the result's value, you could try something like this:

if((integer) $result == $result) {

$result = (integer) $result;

}

return $result;
